Quizipedia. A web quiz game using Wikipedia content

Posted by jimblackler on Nov 11, 2009

Wikipedia is a fantastic resource. As well as being a great encyclopedia, it is a gold mine of information placed in an organized structure. I’ve long been interested in how this could be exploited for applications beyond the encyclopedia, something which is allowed for in the site’s Free Documentation license.

It seemed to me that a general knowledge quiz would be a superb alternative use for the data, so I set about making a Wikipedia quiz, or ‘Quizipedia’. Mining Wikipedia would result in an array of questions from geography, history, entertainment, sports and science; in fact, from all areas of human endeavor and study across the globe.

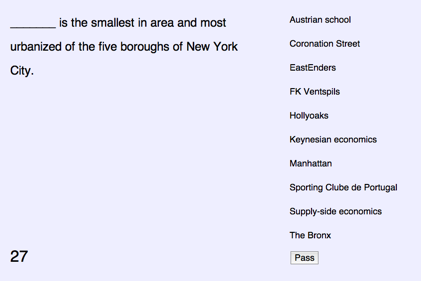

I decided the game would work best in a multiple choice format. The design I chose was to challenge the player to match ten random article names (on the left) against the subject description from the article (on the right). The first sentence of a Wikipedia article usually describes the subject of the article succinctly, making it ideal for a quiz question. The player has sixty seconds to complete the task. The player is permitted to ‘pass’ a question, in which case the next question in rotation is shown.
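The ‘pass’ rotation can be pictured as a simple queue. The following is a minimal Python sketch rather than the actual GWT client code; the class name `QuizRound`, the scoring, and the rule that a wrong answer leaves the question in place are all assumptions made for illustration, and the sixty-second timer is not modelled.

```python
from collections import deque


class QuizRound:
    """Hypothetical model of one round: questions cycle until answered."""

    def __init__(self, questions):
        # questions: iterable of (description, correct_title) pairs
        self.pending = deque(questions)
        self.score = 0

    def current(self):
        # The question currently shown, or None when the round is complete.
        return self.pending[0] if self.pending else None

    def pass_question(self):
        # Send the current question to the back of the rotation.
        self.pending.rotate(-1)

    def answer(self, title):
        # Assumption: a wrong answer leaves the question where it is.
        if not self.pending:
            return False
        _, correct = self.pending[0]
        if title == correct:
            self.pending.popleft()
            self.score += 1
            return True
        return False
```

Because passed questions come back around, a player can leave a weird question until the other answers have been used up.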

The pass mechanism is in part because the scraper is not perfect. I’d like to think it’s about 90% perfect, but weird or unfair questions can slip through. Allowing the player to pass these questions until they can be answered by elimination means the quiz is not ruined.

The game is here and it is ready to play. However, if you’d like to read about how I built it, read on.

There are two parts to the web game. The first is the client, a GWT application served from Google App Engine. This requires a database of questions to work from, and building that database is the job of the second part, the scraper. Selecting the best questions and effectively scraping them from Wikipedia was the tough part.

Scraper

Using the techniques I worked out for my earlier article, I wrote a new scraper to crawl pages from Wikipedia. This was a Java client running on my workstation, making HTTP requests and using an SGML processor and XPath queries to pull out the relevant text. The rate of crawling is no more than 30 articles a minute, which is unlikely to be interpreted as an attack by the web server.
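A cap of 30 articles a minute amounts to leaving at least two seconds between requests. The sketch below shows one way such a cap could be enforced. It is written in Python for brevity and is not the original Java scraper; the `Throttle` class and its parameters are hypothetical names.

```python
import time


class Throttle:
    """Hypothetical helper that spaces calls at least 60/max_per_minute seconds apart."""

    def __init__(self, max_per_minute=30, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = 60.0 / max_per_minute
        self.clock = clock      # injectable so the logic can be tested without waiting
        self.sleep = sleep
        self.last = None        # time of the previous request, if any

    def wait(self):
        # Block until enough time has passed since the previous request.
        now = self.clock()
        if self.last is not None:
            remaining = self.min_interval - (now - self.last)
            if remaining > 0:
                self.sleep(remaining)
                now = self.clock()
        self.last = now
```

A crawler would call `wait()` immediately before each HTTP request.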

Each page crawl extracts links from the article to be used by further searches. It also extracts the opening sentence. All we have to do is strip the name of the article, leaving the reader to guess what is being referred to.
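To illustrate the idea of pulling links and the opening sentence out of a page with XPath-style queries, here is a small Python sketch. It uses the limited XPath support in the standard library’s `xml.etree.ElementTree` and assumes the page has already been converted to well-formed XHTML. The function name, the `/wiki/` prefix check and the naive sentence split are assumptions, not the real scraper’s logic.

```python
import xml.etree.ElementTree as ET


def extract(page_xhtml):
    """Return (links, first_sentence) for a well-formed XHTML page."""
    root = ET.fromstring(page_xhtml)

    # Collect (href, link text) pairs for internal article links only.
    links = [
        (a.get("href"), "".join(a.itertext()))
        for a in root.findall(".//a[@href]")
        if a.get("href").startswith("/wiki/")
    ]

    # Take the text of the first paragraph and cut it at the first ". ".
    # This is a naive split that abbreviations such as "St. Louis" would break.
    first_p = root.find(".//p")
    text = "".join(first_p.itertext()).strip() if first_p is not None else ""
    end = text.find(". ")
    sentence = text[: end + 1] if end != -1 else text
    return links, sentence
```

The link text is kept alongside each target because it is useful later when looking for the subject name in the opening sentence.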

For instance after extraction and stripping bracketed text, the first sentence of the article about the city of Paris reads:

Paris is the capital of France and the country's most populous city.

Simply finding the subject of the article in the opening text, and ‘blanking’ it with underscores, can create a feasible question about the subject. In this case:

______ is the capital of France and the country's most populous city.
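The two steps described here, stripping bracketed text and blanking the subject, can be sketched in a few lines of Python. This is an illustration only; the function names and the regular expressions are assumptions rather than the scraper’s actual rules.

```python
import re

# Matches an innermost (...) or [...] group plus any whitespace before it.
_BRACKETED = re.compile(r"\s*[\(\[][^()\[\]]*[\)\]]")


def strip_bracketed(text):
    # Remove innermost groups repeatedly so that nested brackets also disappear.
    previous = None
    while previous != text:
        previous = text
        text = _BRACKETED.sub("", text)
    return text


def blank_subject(sentence, title, blank="______"):
    # Replace whole-word, case-insensitive occurrences of the title.
    pattern = re.compile(r"(?<!\w)" + re.escape(title) + r"(?!\w)", re.IGNORECASE)
    result, count = pattern.subn(blank, sentence)
    # None signals that the subject was not found, so no question can be made.
    return result if count else None
```

Returning `None` when nothing is replaced gives the caller a simple way to discard articles that cannot be turned into questions.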

The blanking process cannot always be completed. This is because the subject name does not always appear in full in the opening sentence. This means only about 60% of articles can be processed in this way.

In order to maximize the chances of successfully removing the article name from the opening sentence, I consider all the text strings used to link to the article found in the crawl so far. For instance, an article may be linked with the term “United States” or “United States of America”.
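One way to use those alternative link texts is to try each in turn until one is found in the sentence. The Python sketch below tries the longest alias first so that “United States of America” is preferred over “United States”. That ordering, and the function name, are assumptions for illustration.

```python
import re


def blank_with_aliases(sentence, aliases, blank="______"):
    """Blank the first alias (longest first) that appears in the sentence."""
    for alias in sorted(set(aliases), key=len, reverse=True):
        pattern = re.compile(
            r"(?<!\w)" + re.escape(alias) + r"(?!\w)", re.IGNORECASE
        )
        result, count = pattern.subn(blank, sentence)
        if count:
            return result
    # No known name for the article appears in the sentence.
    return None
```

Trying the longest alias first avoids leaving a fragment such as “of America” behind to give the answer away.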

Obscurity

One problem I encountered early on was that there are a surprising number of articles on very obscure topics to be found on Wikipedia. Follow the ‘random article’ link and you’ll get a good idea of this. I was happy for the quiz to be about random topics from general knowledge. However, to give players a fighting chance, the questions should be on topics that they have a reasonable chance of having heard of.

A good signal is the number of incoming links to the article, and also the number of outgoing links. The latter works because articles on popular subjects tend to be well developed by many editors, and this translates into a lot of links going out. Fortunately both these metrics are available to me during the crawl, allowing me to discard smaller or poorly linked articles.
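As a rough sketch of this filter, the Python below counts incoming and outgoing links from a list of (source, target) pairs and keeps only articles that pass both thresholds. The threshold values and function names are hypothetical; the post does not say what cut-offs were used.

```python
from collections import Counter


def link_counts(links):
    """links: iterable of (source_title, target_title) pairs seen in the crawl."""
    links = list(links)
    outgoing = Counter(src for src, _ in links)
    incoming = Counter(dst for _, dst in links)
    return incoming, outgoing


def well_linked(links, min_incoming=2, min_outgoing=2):
    # Thresholds are placeholders; real values would need tuning on crawl data.
    incoming, outgoing = link_counts(links)
    titles = set(incoming) | set(outgoing)
    return {
        title
        for title in titles
        if incoming[title] >= min_incoming and outgoing[title] >= min_outgoing
    }
```

A `Counter` returns zero for titles it has never seen, so articles with no incoming links are dropped without special handling.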

Alternatives

The multiple choice aspect to the game demands feasible alternatives to each answer. Otherwise it can be obvious which is the correct answer by applying a process of elimination. For instance if the question pertains to a country with particular borders and there is only one country on the list of alternative answers, the correct answer is obvious. I wanted to include in each quiz a number of subjects that could reasonably be confused without close consideration of the question. So if the answer was ‘Paris’, the user may also be presented with ‘Lyon’ or ‘Brussels’.

This was the most challenging part of the scraping process. I investigated a number of ways to discover, for a given page, typical alternative answers that could be presented. One was to look for pages sharing a large proportion of incoming or outgoing links. The problem with this is the computation required to match link fingerprints globally: just a few hours of scraping accumulates 5 million links between pages. Fortunately a really good signal turned out to be when the links appear together in the same lists or tables in Wikipedia. There are a surprising number of lists to be found in Wikipedia articles, and they very frequently group together articles of a similar type. Identifying where articles appear together in lists, and making it likely that they will be placed together in quizzes, makes the game tougher and hopefully more compelling.
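The list signal can be sketched as a co-occurrence count: every time two articles are linked from the same list or table, their pair score goes up, and the highest-scoring partners become candidate alternatives. The Python below is an illustration under that assumption. The function names and the ranking rule are not taken from the original scraper.

```python
from collections import defaultdict
from itertools import combinations


def cooccurrence(lists):
    """lists: iterable of lists of article titles found together in one list or table."""
    counts = defaultdict(lambda: defaultdict(int))
    for members in lists:
        # Count each unordered pair once per list.
        for a, b in combinations(sorted(set(members)), 2):
            counts[a][b] += 1
            counts[b][a] += 1
    return counts


def alternatives(title, counts, k=3):
    # Rank partners by how often they share a list, breaking ties by name.
    ranked = sorted(counts.get(title, {}).items(), key=lambda kv: (-kv[1], kv[0]))
    return [name for name, _ in ranked[:k]]
```

Counting only within each list keeps the work proportional to the size of the lists, rather than requiring every pair of pages in the crawl to be compared.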

That’s a brief description of how I built Quizipedia. I hope you enjoy the game, and if you have observations or feedback please leave them in the comments on this page.